AMD Ryzen 9 7950X

| GFP | Benchmark | Cores | Score | Overclocker | Motherboard | Memory |
|---|---|---|---|---|---|---|
| GFP | 7-ZIP | 16xCPU | 291498 | OGS | ASUS X670E Gene | G.Skill |
| GFP | Cinebench - R11.5 | 16xCPU | 88.87 | OGS | ASUS X670E Gene | G.Skill |
| GFP | Cinebench - R15 | 16xCPU | 8100 | Sergmann | Gigabyte X670E AORUS | G.Skill |
| GFP | Cinebench - R20 | 16xCPU | 20049 | OGS | ASUS X670E Gene | G.Skill |
| GFP | Cinebench - R23 | 16xCPU | 50834 | Safedisk | ASUS X670E Gene | G.Skill |
| GFP | Geekbench 3 Multi Core | 16xCPU | 143649 | Splave | ASRock X670E Taichi | G.Skill |
| | Geekbench 4 Multi Core | 16xCPU | 98607 | Domdtxdissar | ASUS X670E Hero | Corsair |
| | Geekbench 5 Multi Core | 16xCPU | 26943 | Domdtxdissar | ASUS X670E Gene | Corsair |
| GFP | HWBot X265 1080P | 16xCPU | 250.436 | Lucky NOOb | MSI MEG X670E Ace | G.Skill |
| GFP | HWBot X265 4K | 16xCPU | 65.594 | Splave | ASRock X670E Taichi | G.Skill |
| GFP | GPUPI for CPU 1B | 16xCPU | 23sec 299 | Splave | ASRock X670E Taichi | G.Skill |
| GFP | Wprime 1024 | 16xCPU | 20sec 689 | Sergmann | Gigabyte X670E AORUS | G.Skill |
| | Wprime 32 | 16xCPU | 1sec 183 | Domdtxdissar | ASUS X670E Hero | Corsair |
| | Y-Cruncher - Pi - 1B | 16xCPU | 15sec476 | Sergmann | Gigabyte X670E Extreme | Gigabyte |
| GFP | Y-Cruncher - Pi - 2.5B | 16xCPU | 45sec 035 | Domdtxdissar | ASUS X670E Hero | Corsair |
| GFP | Y-Cruncher - Pi - 10B | 16xCPU | 221sec 670 | Skullbringer | ASRock X670E Phantom | Team Group |

(Table as of October 13, 2022. Source: hwbot.org database)

AMD Ryzen 5 7600X

| GFP | Benchmark | Cores | Score | Overclocker | Motherboard | Memory |
|---|---|---|---|---|---|---|
| | Cinebench - R15 | 6xCPU | 2837 | Jimshown | ASUS X670E Gene | ADATA |
| | Cinebench - R20 | 6xCPU | 7448 | Alex@Ro | ASUS X670E Gene | G.Skill |
| | Cinebench - R23 Multicore | 6xCPU | 17893 | Zippytek | ASUS X670E Gene | Corsair |
| | Geekbench 3 Multi Core | 6xCPU | 57075 | Alex@Ro | ASUS X670E Gene | G.Skill |
| | Geekbench 4 Multi Core | 6xCPU | 46892 | Zippytek | ASUS X670E Gene | Corsair |
| | Geekbench 5 Multi Core | 6xCPU | 13343 | Zippytek | ASUS X670E Gene | Corsair |
| | HWBot X265 4K | 6xCPU | 23.665 | Jimshown | ASUS X670E Gene | ADATA |
| | HWBot X265 1080P | 6xCPU | 105.884 | Zippytek | ASUS X670E Gene | Corsair |
| GFP | GPUPI for CPU 1B | 6xCPU | 59sec 184 | Safedisk | ASUS X670E Gene | G.Skill |
| | Y-Cruncher - Pi - 1B | 6xCPU | 26sec626 | Zippytek | ASUS X670E Gene | Corsair |
| | Y-Cruncher - Pi - 2.5B | 6xCPU | 1min15 | Zippytek | ASUS X670E Gene | Corsair |

(Table as of October 13, 2022. Source: hwbot.org database)

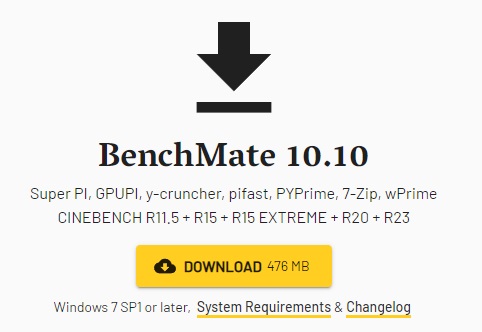

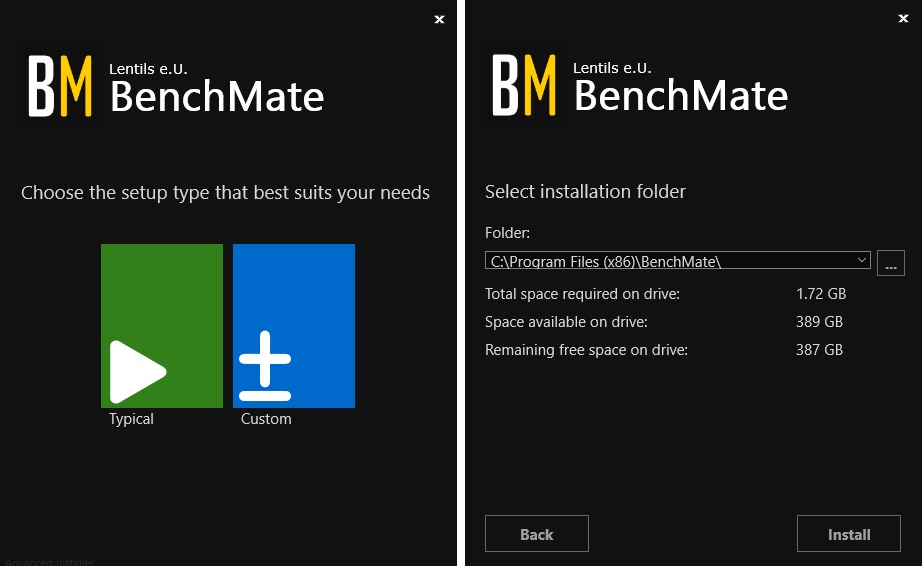

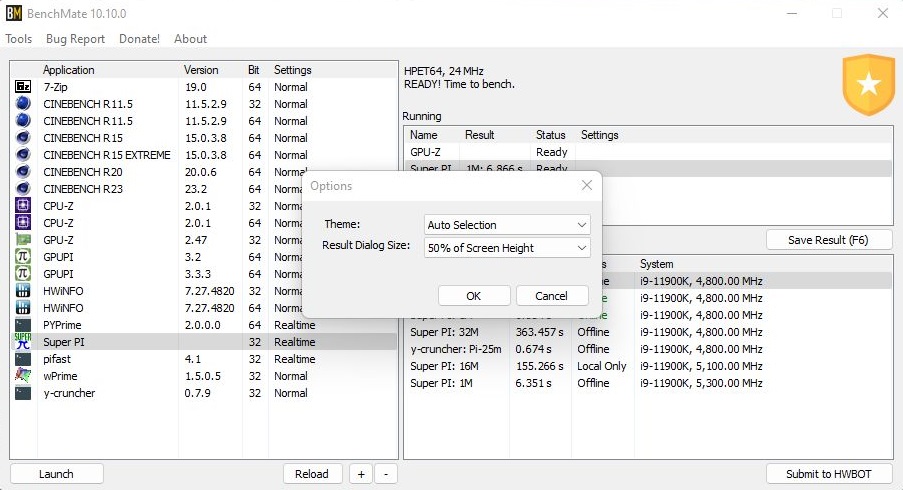

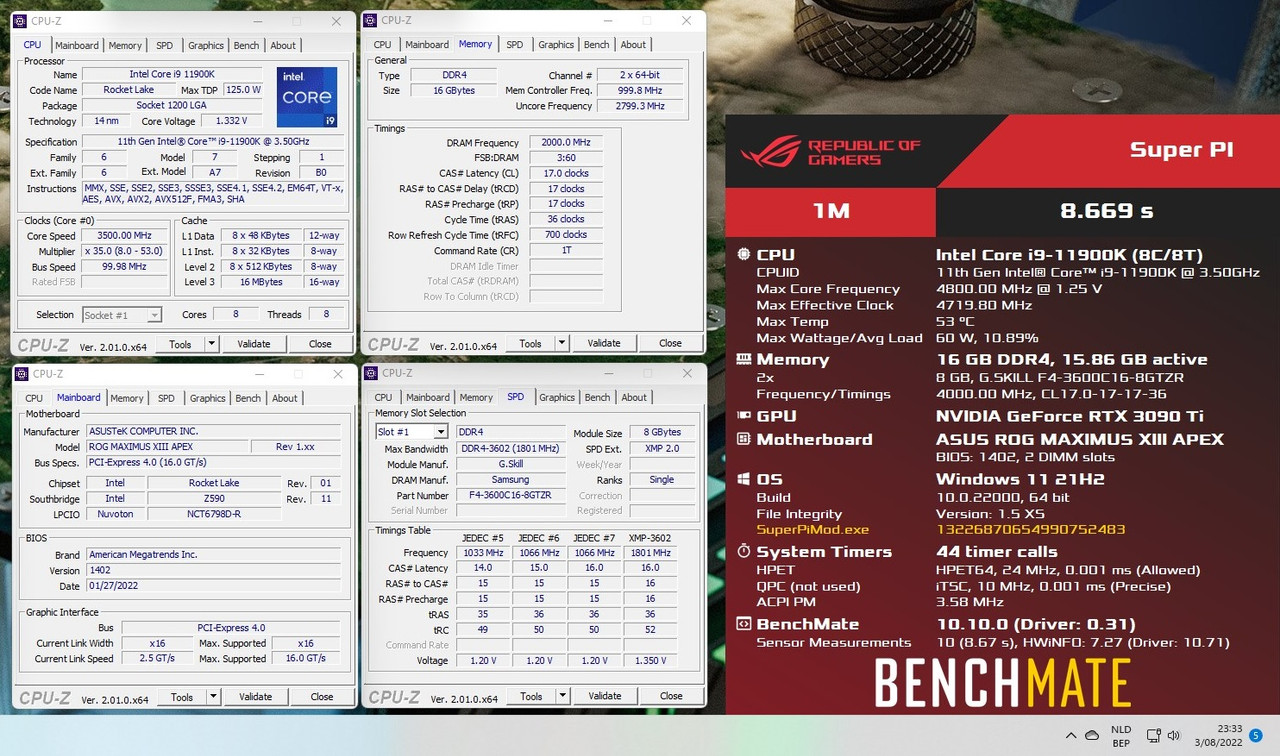

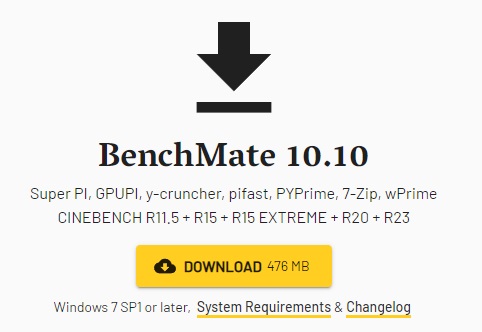

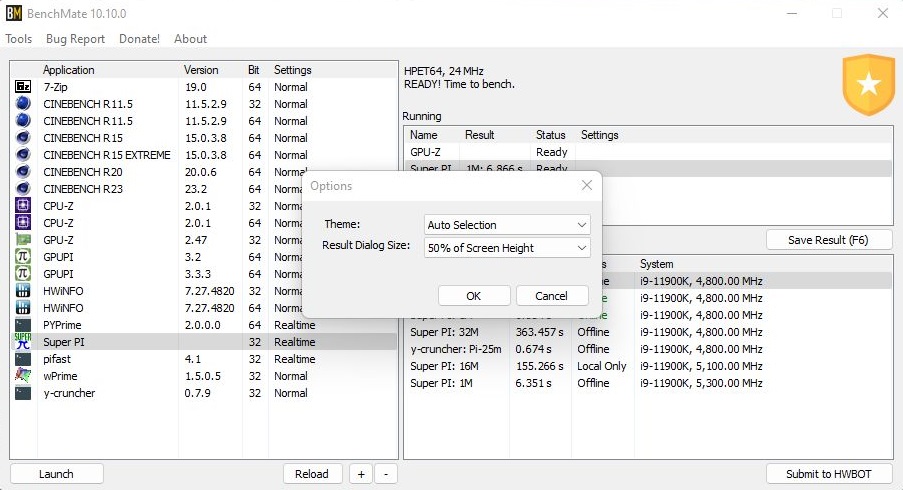

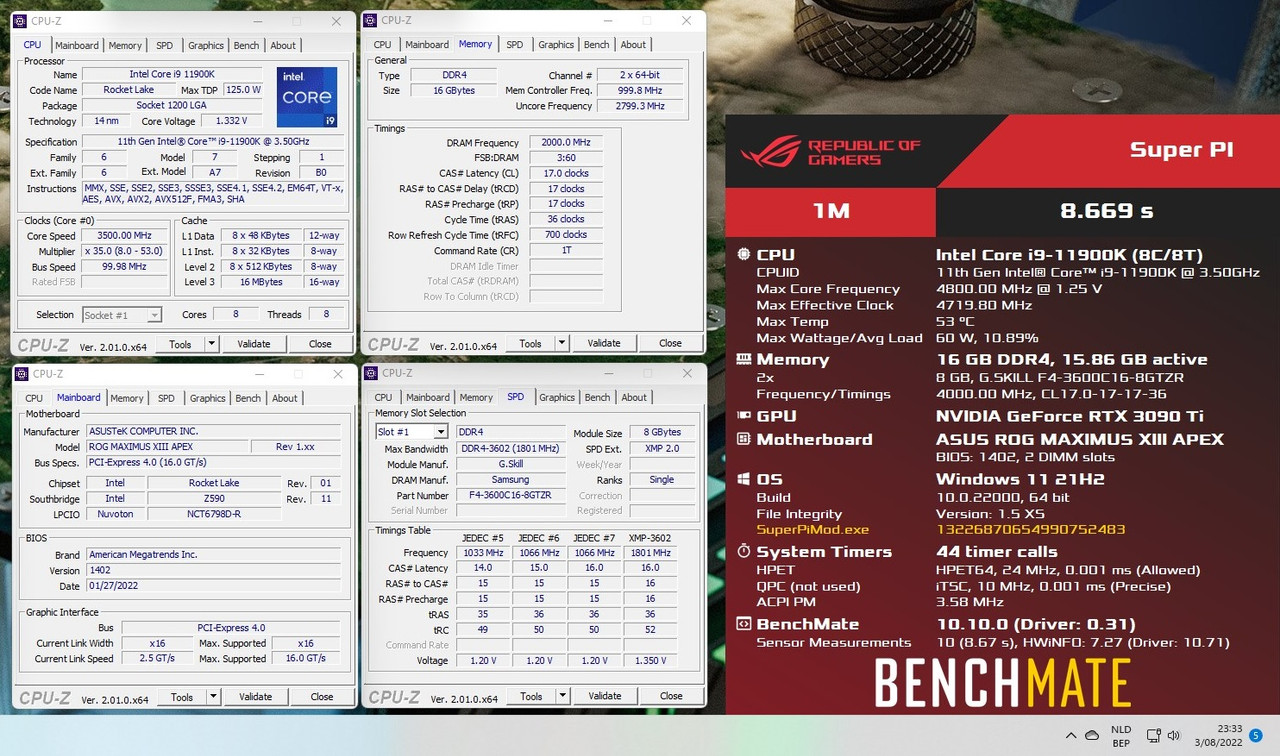

BenchMate 10.10.0 is here. Besides the features mentioned in the changelog, this new version supports Windows 7 again.

- The installer has been simplified and indeed runs faster than in previous versions.

Another bonus is the new configurable Score window, which gives you more space to position the required information. Its height can be set to:

- AUTO

- 50% of screen height

- 75% of screen height

- 100% of screen height

- On the same Score window Mat included CPU-Z, GPU-Z and HWiNFO buttons to make it easier to open the information required for the verification screen.

- Another great fix (at least on my setup) is that the anti-virus no longer causes an error when launching e.g. the Y-Cruncher or Wprime benchmark.

Thanks Mat for the new version of your popular bench software. Users will applaud the Windows 7 support, and hopefully we can merge all the "With BenchMate benchmarks" with the original benchmarks soon!

If you want to support Mat for all his hard work, you can become a Patreon member or just donate a few bucks.

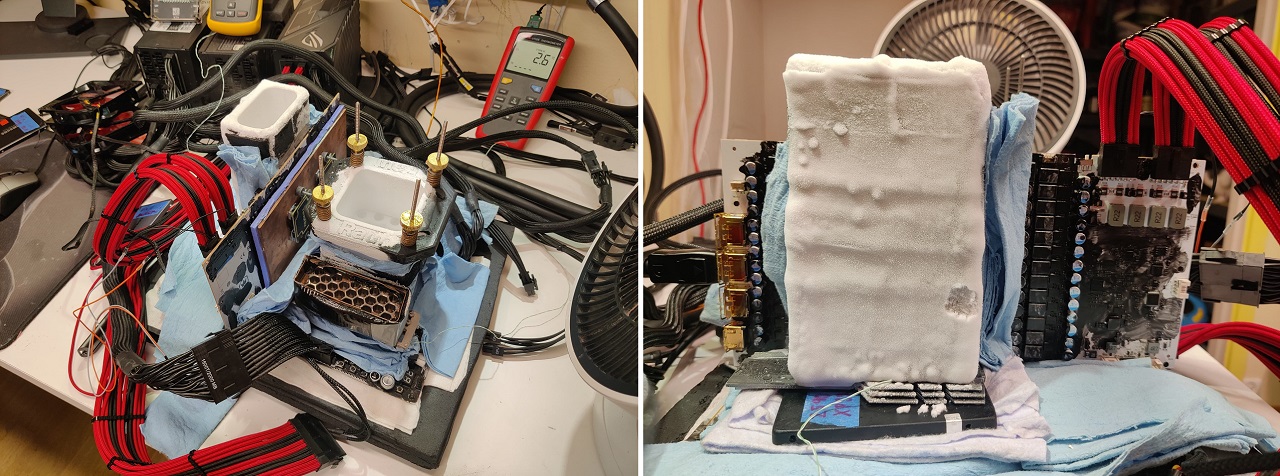

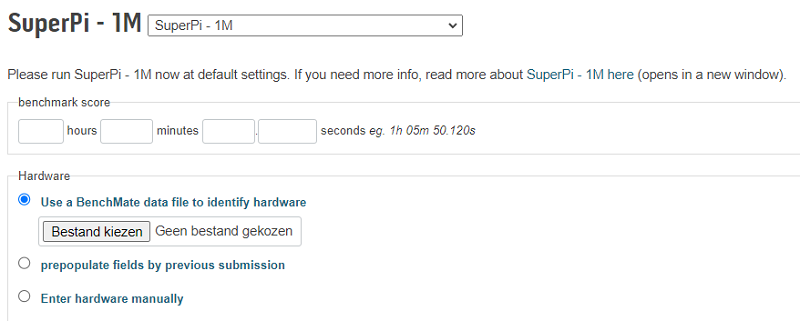

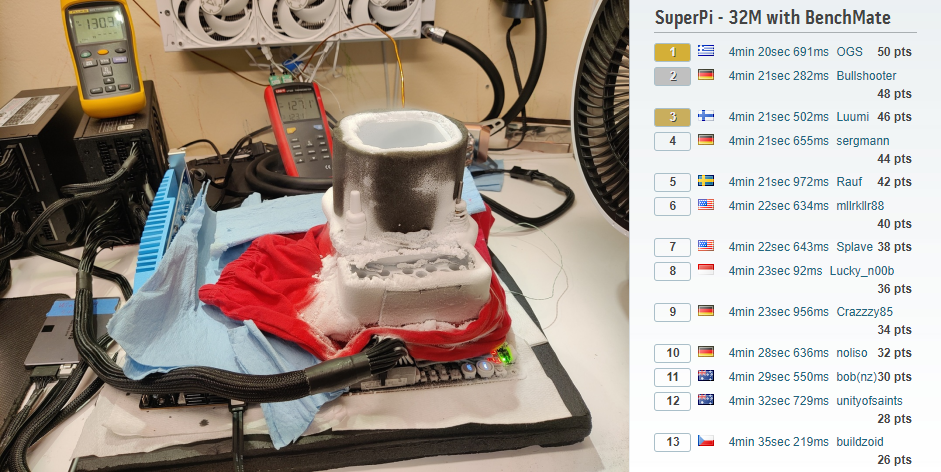

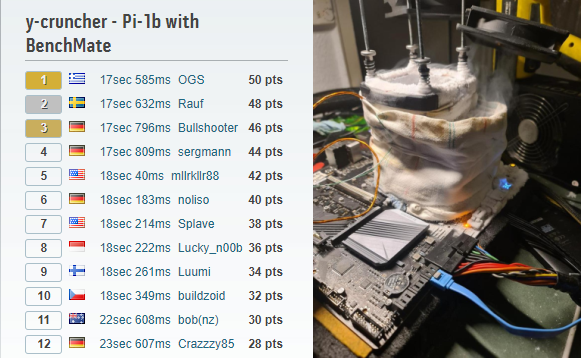

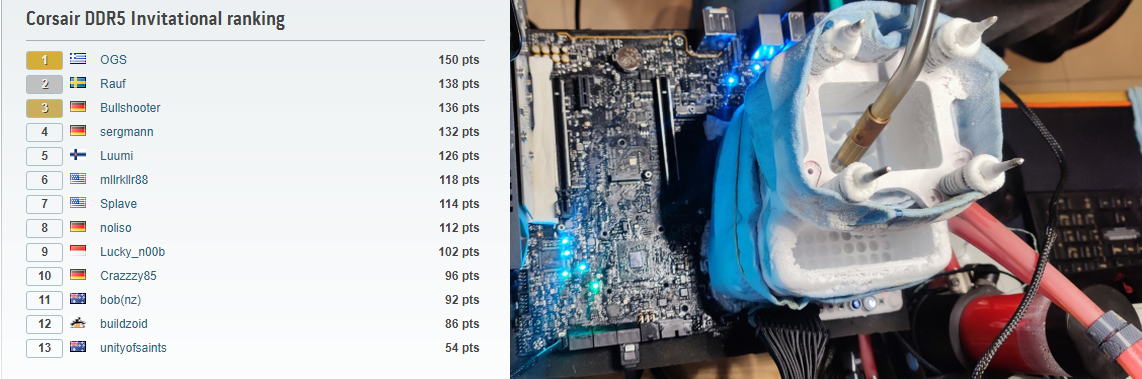

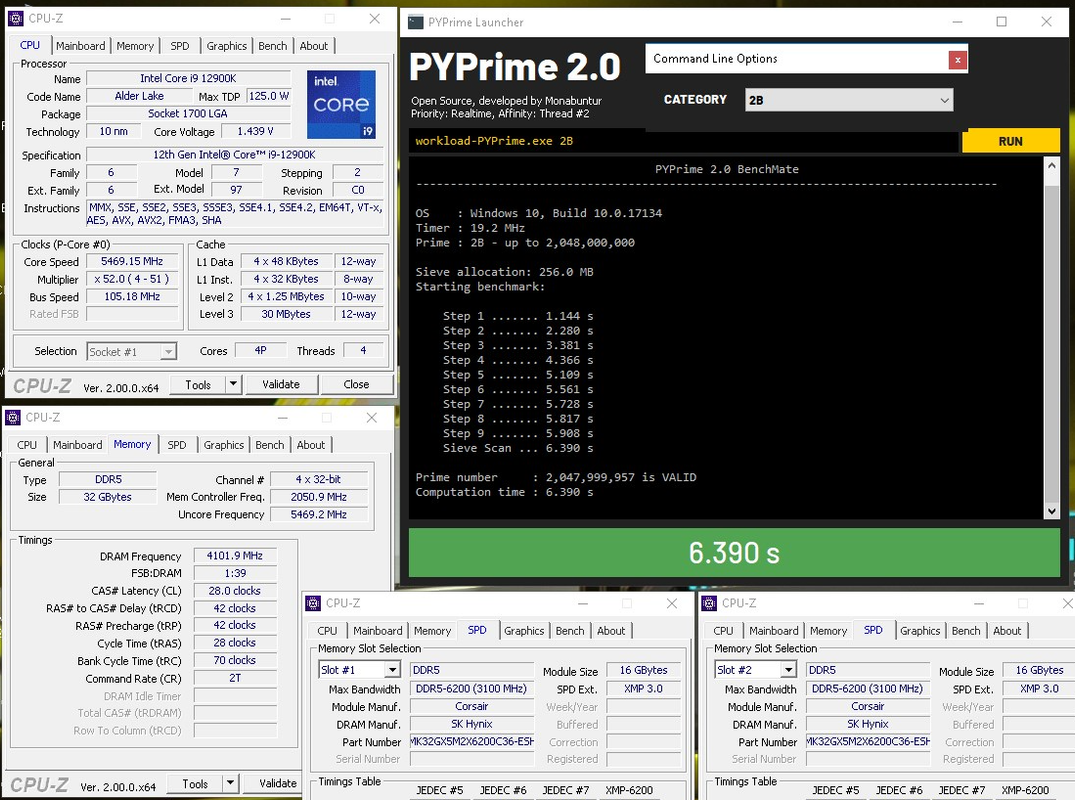

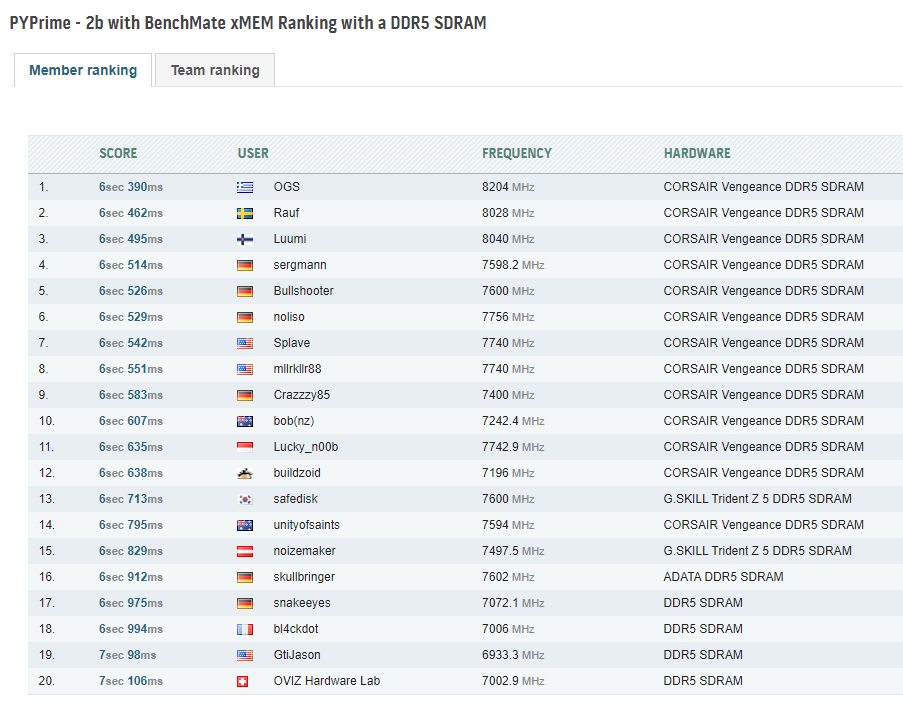

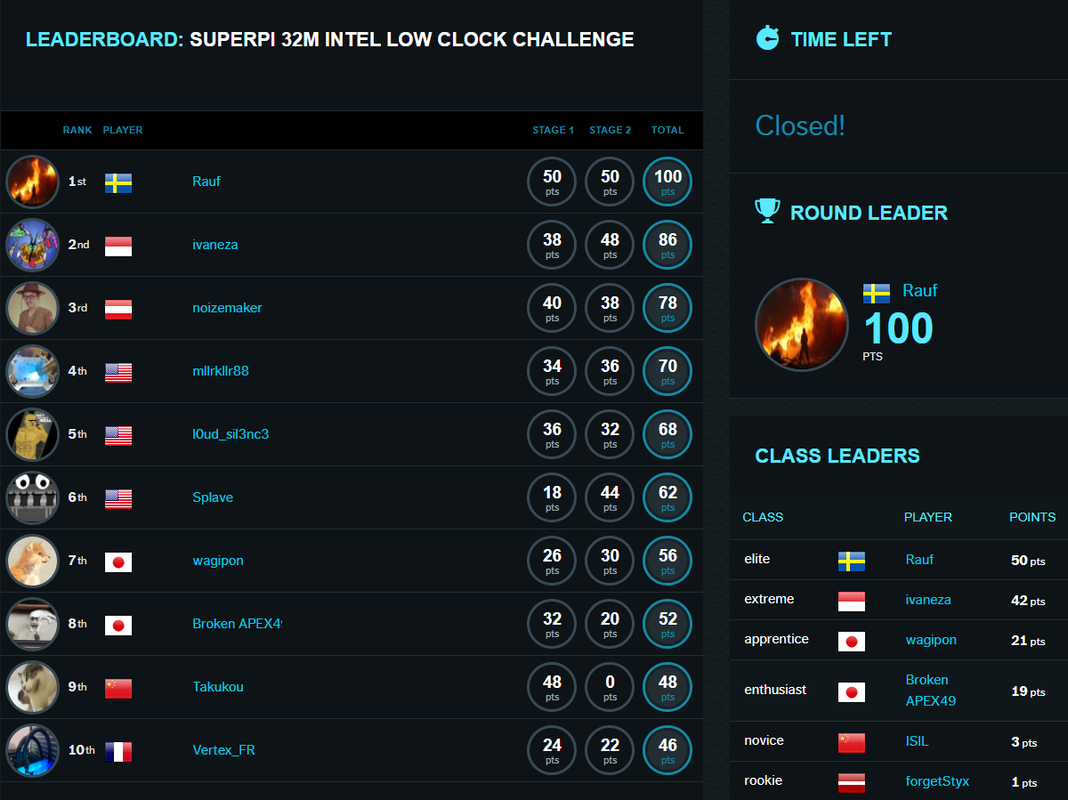

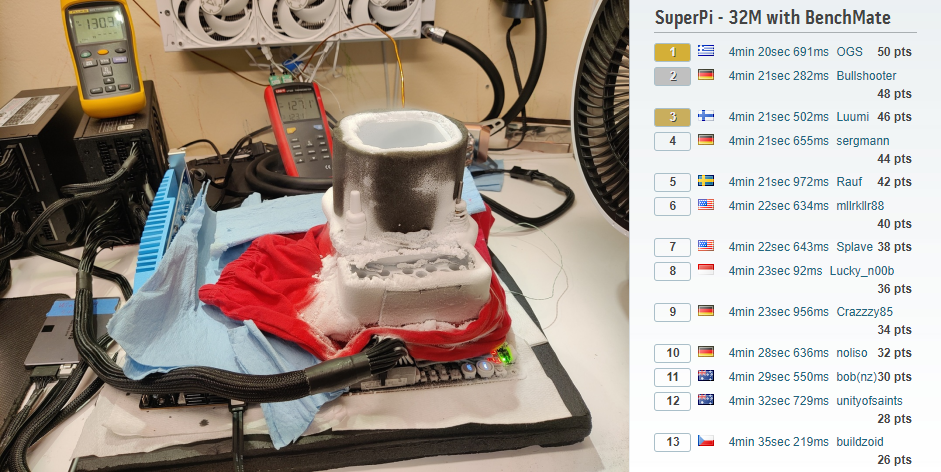

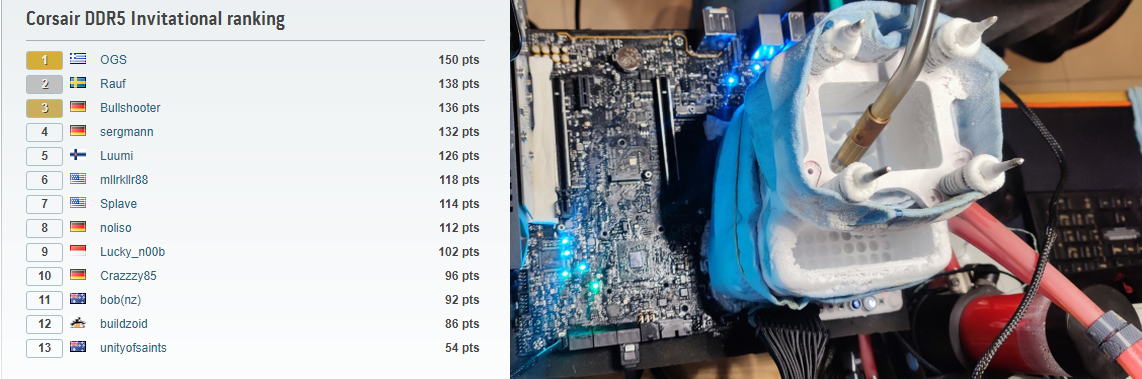

After dominating Stage 1 of the "Corsair DDR5 Invitational", Greek overclockers Stavros Savvopoulos and Phil Strecker from Team Overclocked Gaming Systems also grabbed first spot in both Stage 2 and Stage 3.

Stage 2 featured the all-time classic SuperPi 32M, a benchmark where every extra MHz on the RAM and every tightened memory timing can give one the edge to claim victory, albeit by mere milliseconds.

Looking at the final results, we see the top 5 within a second of each other. The ASRock OCers had to throw in the towel, as their boards apparently couldn't clock the Corsair Vengeance memory as high as the ASUS, eVGA and Gigabyte motherboards with the currently available BIOS version.

After Stage 2, Team OGS was comfortably in the lead with 100 pts, followed by Luumi with 92 pts, and Bullshooter and Rauf sharing 3rd spot with 90 pts each. Sergmann trailed the duo by just 2 pts.

The motherboards used by the aforementioned OCers:

- OGS - ASUS ROG Maximus Z690 Apex

- Luumi - eVGA Z690 Dark K!ngp!n

- Bullshooter - Gigabyte Z690 AORUS Tachyon

- Rauf - ASUS ROG Maximus Z690 Apex

- Sergmann - Gigabyte Z690 AORUS Tachyon
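For those who like to check the math, here is a minimal Python sketch of the standings after Stage 2. It only uses the points quoted above. Sergmann's total of 88 pts is derived on the assumption that he sat 2 pts behind the duo on 90 pts, and the `standings` helper is just an illustrative name, not something from the HWBOT site.

```python
# Points after Stage 2 as quoted in the text.
# Assumption: Sergmann is 2 pts behind the Bullshooter/Rauf duo (90 - 2).
points = {
    "OGS": 100,
    "Luumi": 92,
    "Bullshooter": 90,
    "Rauf": 90,
    "Sergmann": 90 - 2,
}


def standings(points):
    """Return (rank, name, pts) tuples; tied scores share a rank (1, 2, 3, 3, 5)."""
    # Sort by points descending, then by name so ties have a stable order.
    ordered = sorted(points.items(), key=lambda item: (-item[1], item[0]))
    result = []
    prev_pts = None
    rank = 0
    for position, (name, pts) in enumerate(ordered, start=1):
        if pts != prev_pts:
            # New score: the rank jumps to the current position.
            rank = position
            prev_pts = pts
        result.append((rank, name, pts))
    return result


for rank, name, pts in standings(points):
    print(f"{rank}. {name}: {pts} pts")
```

With a shared 3rd spot the next OCer lands on rank 5, which is why spots 2 through 5 were still wide open going into Stage 3.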

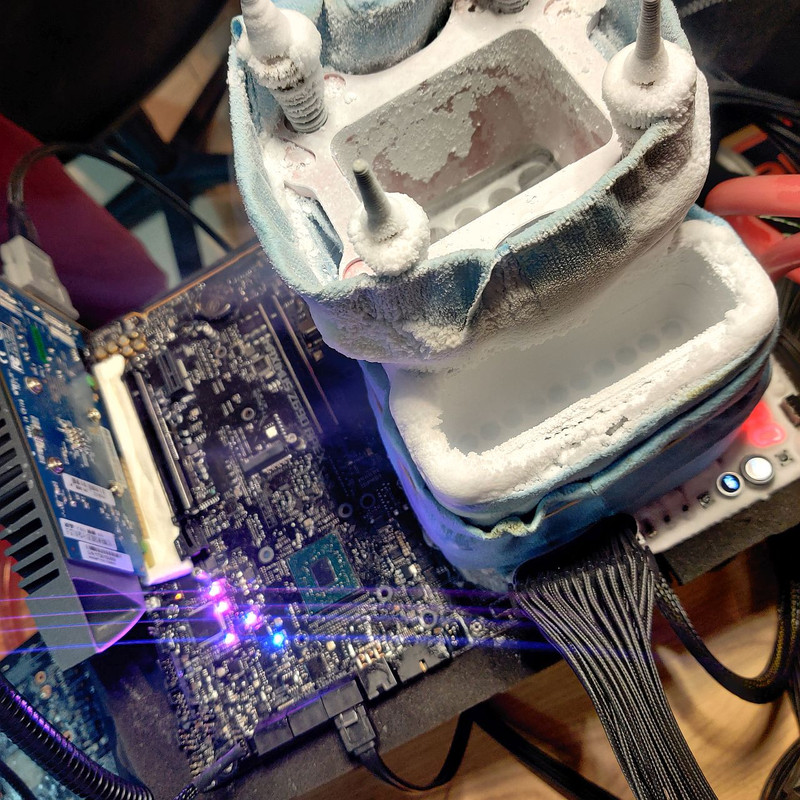

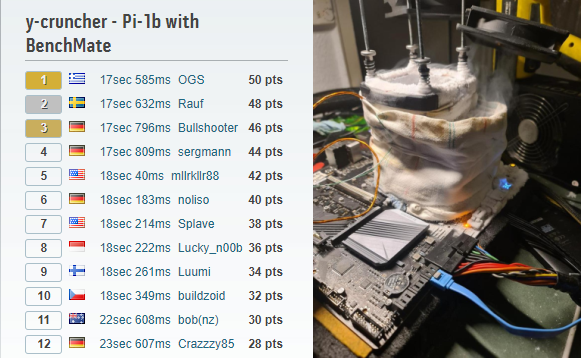

For Stage 3, Team OGS just had to finish in the top 5 to grab the overall win, though nothing was final for spots 2 through 5. The benchmark of choice was AIDA64, but a few bugs were quickly discovered, so the participants had to agree on another benchmark. Y-Cruncher 1B was picked from the proposed alternatives. Proper AVX support was a must, both from the CPU used and from the motherboard BIOS. The eVGA Dark and ASRock Aqua OC users were up for a real challenge here, as their BIOSes were not optimised for this. Nevertheless, mllrkller88, Splave and Luumi put down some solid scores, but could never compete with the rest. This was especially a bummer for Luumi, who could otherwise have consolidated his place in the top 3.

Y-Cruncher is not only about raw clocks; platform stability plays a huge role too. Of course, the regular tweaks applied here and there make a difference as well. Team OGS once more snatched the top spot, closely followed by Swedish OCer Rauf, while Bullshooter from Germany finished in 3rd spot. This settled the final ranking of the Corsair DDR5 Invitational.

A huge congratz to all participants, who played fair, showed great sportsmanship and maxed out their platforms and the kindly provided Corsair DDR5 Vengeance memory sticks. The top 3 will be contacted for shipping details.

Big shoutout to Corsair for making the two competitions possible.

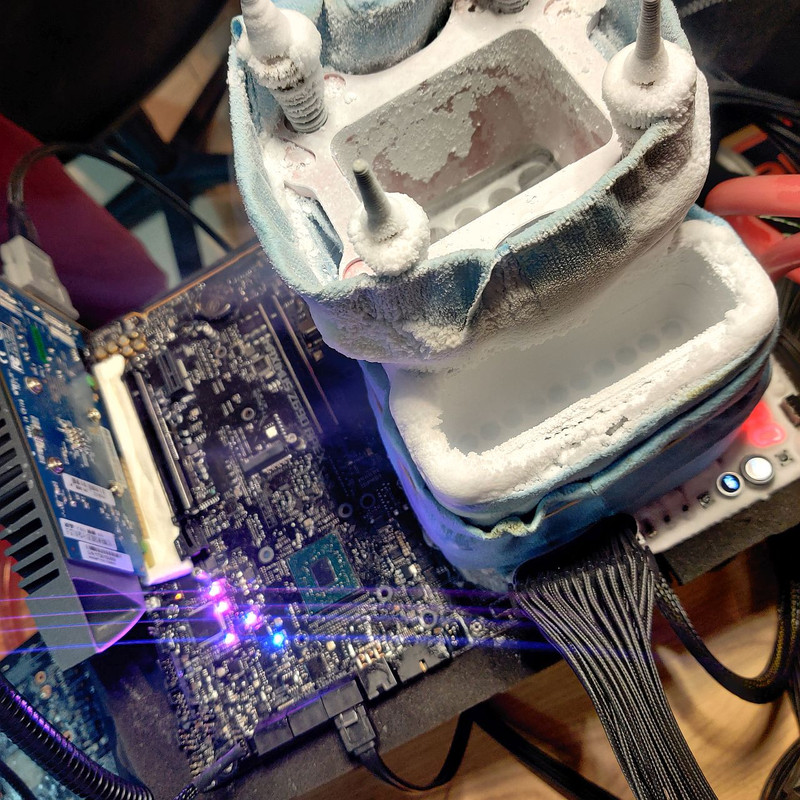

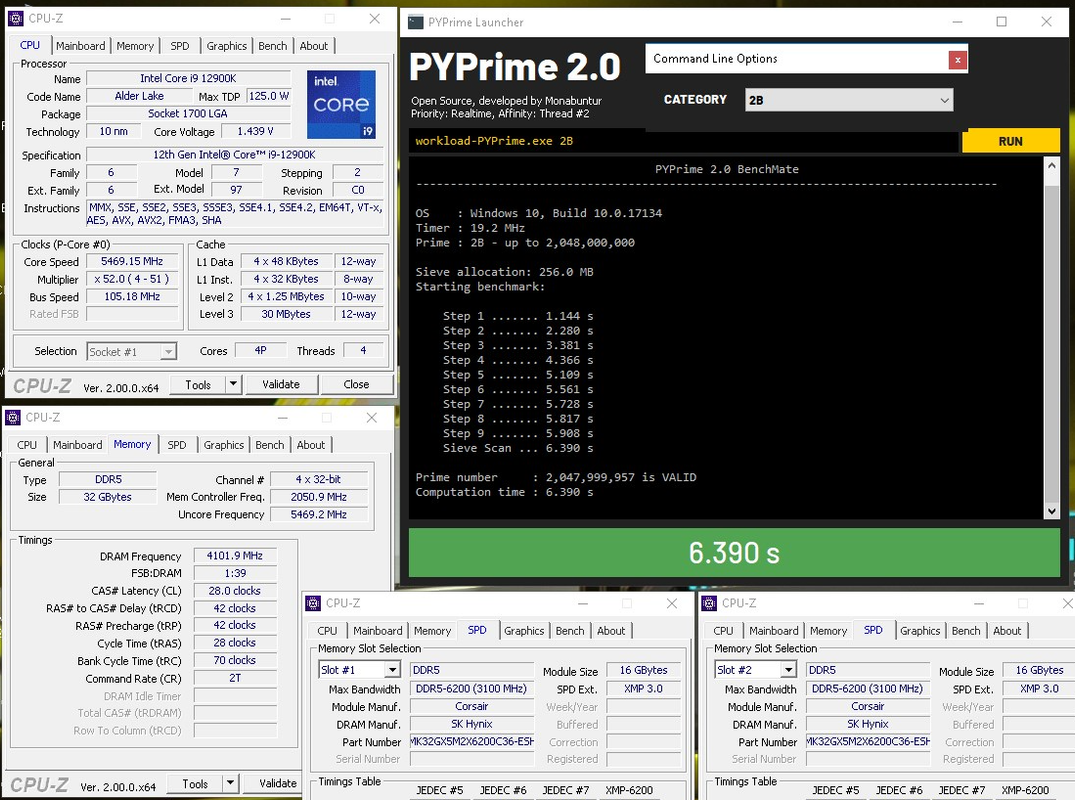

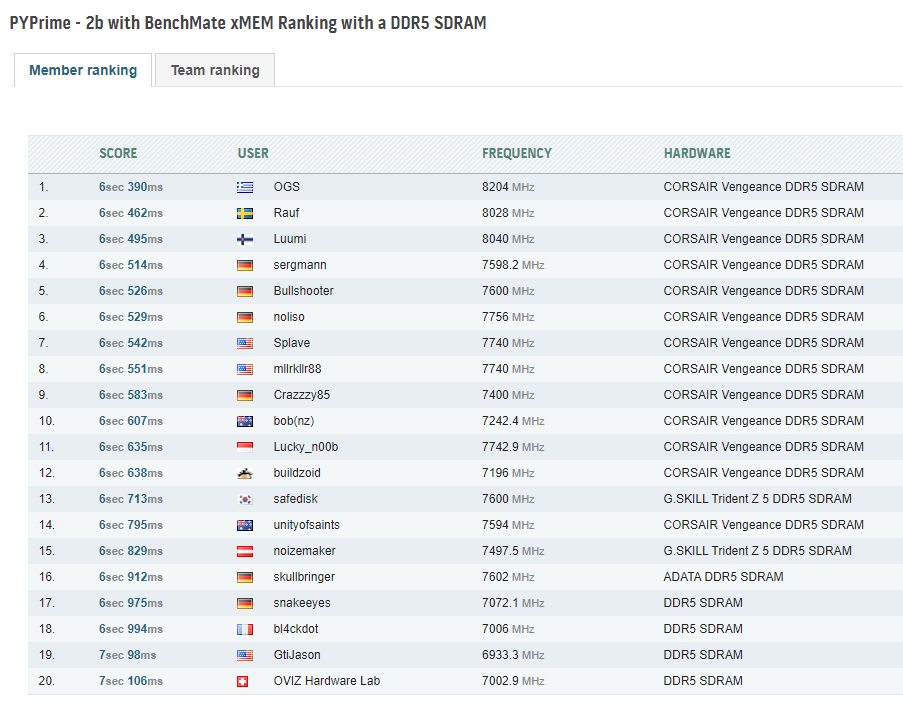

Stage 1 of the Corsair DDR5 Invitational was dominated by Greek overclocker Stavros Savvopoulos from Team Overclocked Gaming Systems, who was the first to drop below the 6sec 400ms mark. You might think he just got lucky with two excellent sticks that clocked higher than the rest, but Stavros is a true tweaker pur sang who always manages to combine high frequencies with pretty darn good efficiency. That is why he always does a lot of pretesting before going cold.

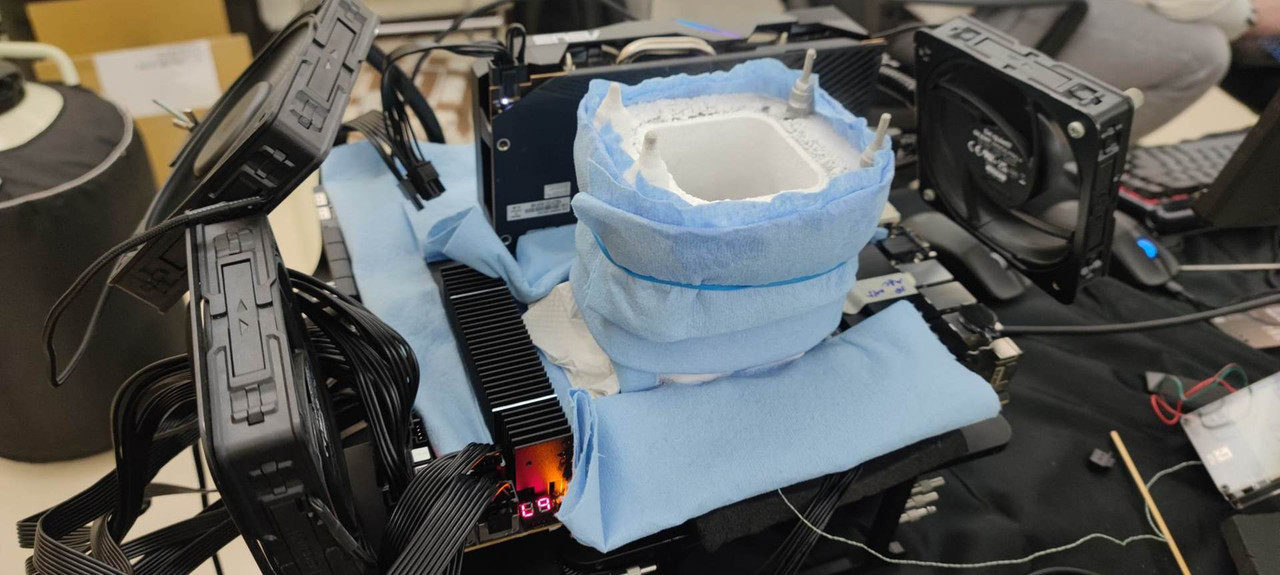

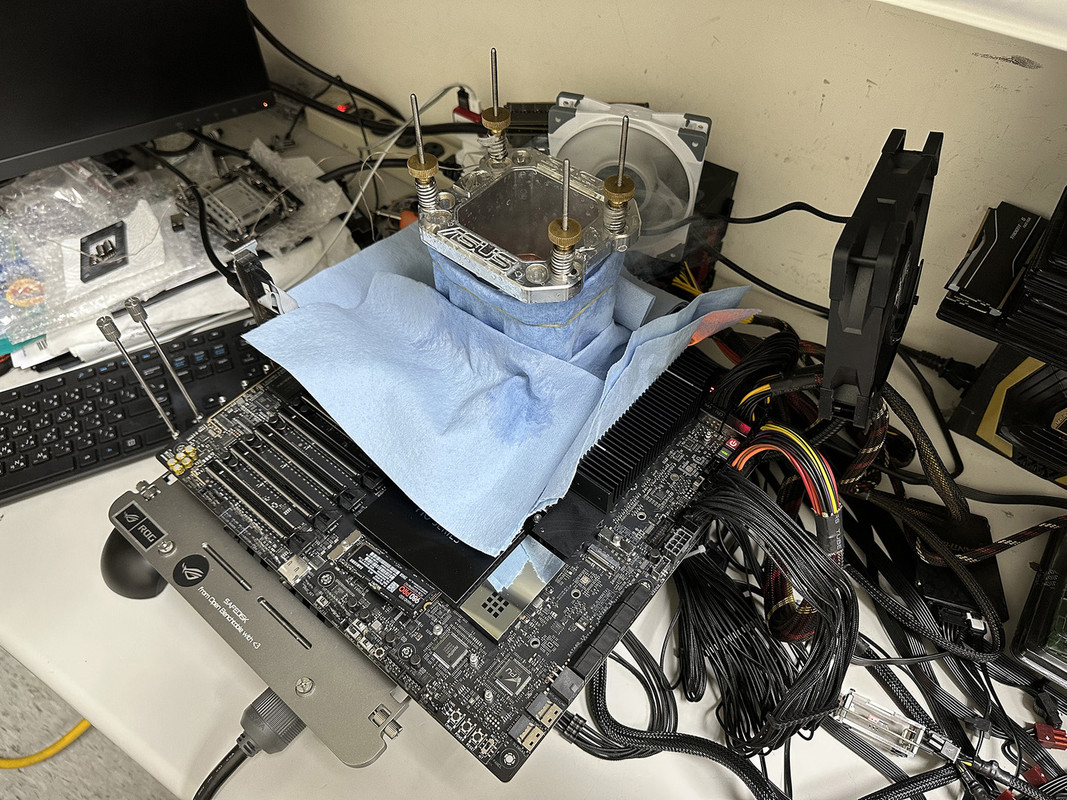

He pushed his liquid nitrogen cooled Corsair memory kit over 8200 MT/s to obliterate the previous PYPrime DDR5 World Record from in-house ASUS overclocker Safedisk, even though the Stage limited both the processor and cache frequencies, meaning even faster scores are possible in the future. His ASUS ROG Maximus Z690 Apex motherboard is fully insulated with vaseline to withstand the frosty LN2 benching.

Congratz Harry (and Phil)!

The current PYPrime ranking is now almost completely dominated by the participants Corsair invited, perfectly reflecting their skills.

Stage 2 is ongoing, but for many the current scores are just placeholders. It is only a matter of a few days before we see whether the 4min 20sec mark can be reached.

The HWBot 2022 Challenger Round 1 series has started and runs until the 30th of April. For this edition we stepped away from the core-count based divisions and now base them on the memory type and/or CPU manufacturer.

- Division 1 is for DDR5 platforms only

- Division 2 is for DDR4 and AMD based platforms

- Division 3 is for DDR4 and Intel based platforms

- Division 4 is for DDR3 and AMD based platforms

- Division 5 is for DDR3 and Intel based platforms

- Division 6 is for DDR2 platforms

- Division 7 is for good old DDR legacy based platforms
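The division rules above can be sketched as a small lookup. This is only an illustration of the list as written here: the function name `challenger_division` and its string arguments are hypothetical and not part of any HWBot API, and the official competition page remains the reference for edge cases.

```python
def challenger_division(memory: str, cpu_vendor: str = "") -> int:
    """Map a platform to its Challenger division, following the list above.

    memory: "DDR5", "DDR4", "DDR3", "DDR2" or "DDR" (case-insensitive).
    cpu_vendor: "AMD" or "Intel"; only consulted for DDR4 and DDR3.
    """
    mem = memory.strip().upper()
    vendor = cpu_vendor.strip().upper()

    if mem == "DDR5":
        return 1  # DDR5 platforms only, vendor does not matter
    if mem in ("DDR4", "DDR3"):
        # DDR4 starts at division 2, DDR3 at division 4; AMD first, then Intel.
        base = 2 if mem == "DDR4" else 4
        if vendor == "AMD":
            return base
        if vendor == "INTEL":
            return base + 1
        raise ValueError(f"{mem} divisions need a CPU vendor of AMD or Intel")
    if mem == "DDR2":
        return 6
    if mem == "DDR":
        return 7  # good old DDR legacy platforms
    raise ValueError(f"unknown memory type: {memory!r}")


print(challenger_division("DDR4", "Intel"))
```

So a DDR4 Intel rig ends up in Division 3, while any DDR5 platform goes straight to Division 1 regardless of the CPU brand.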

The official competition background is another marvel from DaQuteness.

Intel Alder Lake 12600K & 12600KF

| GFP | Benchmark | Cores | Score | Overclocker | Motherboard | Memory |
|---|---|---|---|---|---|---|
| GFP | Cinebench - 2003 | 10xCPU | 10881 | Splave | ASRock Z690 Aqua OC | Kingston |
| GFP | Cinebench - R11.5 | 10xCPU | 45.78 | shar00750 | ASRock Z690 Aqua OC | G.Skill Trident Z5 |
| GFP | Cinebench - R15 | 10xCPU | 3993 | P5ycho | ROG Maximus Z690 Apex | TeamGroup |
| GFP | Cinebench - R20 | 10xCPU | 10254 | P5ycho | ROG Maximus Z690 Apex | TeamGroup |
| GFP | Cinebench - R23 | 10xCPU | 26140 | Baby-J | MSI MEG Z690 Unify-X | Kingston |
| GFP | Geekbench 3 Multi Core | 10xCPU | 78194 | P5ycho | ROG Maximus Z690 Apex | TeamGroup |
| | Geekbench 3 Single Core | 10xCPU | 10750 | shar00750 | ASRock Z690 Aqua OC | Kingston |
| | Geekbench 4 Single Core | 10xCPU | 11973 | Splave | ASRock Z690 Aqua OC | Kingston |
| | Geekbench 5 Single Core | 10xCPU | 2783 | Splave | ASRock Z690 Aqua OC | Kingston |
| GFP | Geekbench 5 Multi Core | 10xCPU | 19287 | Splave | ASRock Z690 Aqua OC | Kingston |
| GFP | HWBot X265 4K | 10xCPU | 32.665 | Splave | ASRock Z690 Aqua OC | Kingston |
| GFP | HWBot X265 1080P | 10xCPU | 135.72 | shar00750 | ASRock Z690 Aqua OC | Kingston |
| GFP | PCMark 10 Express | 10xCPU | 10792 | L0ud Silence | ASRock Z690 Aqua OC | Klevv |
| | Pifast | 10xCPU | 8sec470 | Splave | ASRock Z690 Aqua OC | Kingston |
| | SuperPi 1M | 10xCPU | 5sec057 | Baby-J | MSI MEG Z690 Unify-X | Kingston |
| | SuperPi 32M | 10xCPU | 4min03sec | Baby-J | MSI MEG Z690 Unify-X | Kingston |
| | Y-Cruncher Pi-1B | 10xCPU | 23sec409 | Splave | ASRock Z690 Aqua OC | Kingston |
| GFP | GPUPI for CPU - 1B | 10xCPU | 1min8sec | P5ycho | ROG Maximus Z690 Apex | TeamGroup |
| GFP | XTU 2.0 | 10xCPU | 9058 | P5ycho | ROG Maximus Z690 Apex | |

(Table as of March 14, 2022. Source: hwbot.org database)


Intel's Pentium Gold G7400 is dominating the HWBOT frontpage. This dual-core, non-K Alder Lake SKU provides cheap fun and is the main focus of the current benching mayhem.

Intel Pentium Gold G7400 & G7400T

| GFP | Benchmark | Cores | Score | Overclocker | Motherboard | Memory |
|---|---|---|---|---|---|---|
| GFP | Cinebench - R11.5 | 2xCPU | 10.09 | Hicookie | Gigabyte Z690 AORUS Tachyon | Gigabyte |
| GFP | Cinebench - R15 | 2xCPU | 876 | Zarok77 | ASUS ROG Z690 Apex | G.Skill Trident Z5 |
| GFP | Cinebench - R20 | 2xCPU | 2478 | Safedisk | ASUS ROG Z690 Apex | G.Skill Trident Z5 |
| GFP | Cinebench - R23 | 2xCPU | 6090 | Sergmann | Gigabyte Z690 AORUS Tachyon | Gigabyte |
| GFP | Geekbench 3 Multi Core | 2xCPU | 21983 | Safedisk | ASUS ROG Z690 Apex | G.Skill Trident Z5 |
| GFP | Geekbench 4 Multi Core | 2xCPU | 21007 | OViZ HW Labs | MSI MEG Z690 Unify-X | T-Force Delta |
| GFP | Geekbench 5 Multi Core | 2xCPU | 5325 | OViZ HW Labs | MSI MEG Z690 Unify-X | T-Force Delta |
| GFP | HWBot X265 1080P | 2xCPU | 32.092 | OViZ HW Labs | MSI MEG Z690 Unify-X | T-Force Delta |
| GFP | HWBot X265 4K | 2xCPU | 8.18 | Safedisk | ASUS ROG Z690 Apex | G.Skill Trident Z5 |
| GFP | GPUPI CPU 100M | 2xCPU | 15sec169 | OViZ HW Labs | MSI MEG Z690 Unify-X | T-Force Delta |
| GFP | GPUPI CPU 1B | 2xCPU | 4min22sec | Safedisk | ASUS ROG Z690 Apex | G.Skill Trident Z5 |
| GFP | GPUPI v3.3 CPU 100M | 2xCPU | 15sec149 | OViZ HW Labs | MSI MEG Z690 Unify-X | T-Force Delta |
| GFP | GPUPI v3.3 CPU 1B | 2xCPU | 5min2sec | OViZ HW Labs | MSI MEG Z690 Unify-X | T-Force Delta |
| GFP | PCMARK 7 | 2xCPU | 10588 | OViZ HW Labs | MSI MEG Z690 Unify-X | T-Force Delta |
| GFP | PCMARK 10 Ex | 2xCPU | 10047 | OViZ HW Labs | MSI MEG Z690 Unify-X | T-Force Delta |
| GFP | PCMARK 10 | 2xCPU | 9192 | OViZ HW Labs | MSI MEG Z690 Unify-X | T-Force Delta |
| GFP | Y-Cruncher - Pi - 25M | 2xCPU | 1sec179 | OViZ HW Labs | MSI MEG Z690 Unify-X | T-Force Delta |
| GFP | Y-Cruncher - Pi - 1B | 2xCPU | 1min2sec | Bullshooter | Gigabyte Z690 AORUS Tachyon | Gigabyte |
| GFP | Y-Cruncher - Pi - 2.5B | 2xCPU | 3min12sec | Sergmann | Gigabyte Z690 AORUS Tachyon | Gigabyte |

(Table as of March 12, 2022. Source: hwbot.org database)

The top ranking seemed to be almost exclusively limited to Gigabyte Z690 AORUS Tachyon motherboard users, but MSI is back with the MEG Z690 Unify-X and ASUS with their ROG Z690 Apex.

It seems Team ASUS found some golden G7400 processors, p0wning previous scores as they can clock well over 6.2GHz in most benchmarks.

Intel Pentium Gold G7400 & G7400T

| GFP | Benchmark | Cores | Score | Overclocker | Motherboard | Memory |
|---|---|---|---|---|---|---|
| GFP | Cinebench - R11.5 | 2xCPU | 10.73 | Nik | ASUS ROG Z690 Apex | G.Skill Trident Z5 |
| GFP | Cinebench - R15 | 2xCPU | 935 | Nik | ASUS ROG Z690 Apex | G.Skill Trident Z5 |
| GFP | Cinebench - R20 | 2xCPU | 2532 | Safedisk | ASUS ROG Z690 Apex | G.Skill Trident Z5 |
| GFP | Cinebench - R23 | 2xCPU | 6438 | Safedisk | ASUS ROG Z690 Apex | G.Skill Trident Z5 |
| GFP | Geekbench 3 Multi Core | 2xCPU | 22265 | Safedisk | ASUS ROG Z690 Apex | G.Skill Trident Z5 |
| GFP | Geekbench 4 Multi Core | 2xCPU | 23405 | Safedisk | ASUS ROG Z690 Apex | G.Skill Trident Z5 |
| GFP | Geekbench 5 Multi Core | 2xCPU | 5721 | Safedisk | ASUS ROG Z690 Apex | G.Skill Trident Z5 |
| GFP | HWBot X265 1080P | 2xCPU | 34.615 | Nik | ASUS ROG Z690 Apex | G.Skill Trident Z5 |
| GFP | HWBot X265 4K | 2xCPU | 8.18 | Safedisk | ASUS ROG Z690 Apex | G.Skill Trident Z5 |
| GFP | GPUPI CPU 100M | 2xCPU | 13sec803 | Nik | ASUS ROG Z690 Apex | G.Skill Trident Z5 |
| GFP | GPUPI CPU 1B | 2xCPU | 4min22sec | Safedisk | ASUS ROG Z690 Apex | G.Skill Trident Z5 |
| GFP | GPUPI v3.3 CPU 100M | 2xCPU | 14sec365 | Safedisk | ASUS ROG Z690 Apex | G.Skill Trident Z5 |
| GFP | GPUPI v3.3 CPU 1B | 2xCPU | 4min25sec | Safedisk | ASUS ROG Z690 Apex | G.Skill Trident Z5 |
| GFP | PCMARK 7 | 2xCPU | 10588 | OViZ HW Labs | MSI MEG Z690 Unify-X | T-Force Delta |
| GFP | PCMARK 10 Ex | 2xCPU | 10047 | OViZ HW Labs | MSI MEG Z690 Unify-X | T-Force Delta |
| GFP | PCMARK 10 | 2xCPU | 9192 | OViZ HW Labs | MSI MEG Z690 Unify-X | T-Force Delta |
| GFP | Y-Cruncher - Pi - 25M | 2xCPU | 8sec833 | Safedisk | ASUS ROG Z690 Apex | G.Skill Trident Z5 |
| GFP | Y-Cruncher - Pi - 1B | 2xCPU | 58sec996 | Safedisk | ASUS ROG Z690 Apex | G.Skill Trident Z5 |
| GFP | Y-Cruncher - Pi - 2.5B | 2xCPU | 2min48sec | Safedisk | ASUS ROG Z690 Apex | G.Skill Trident Z5 |

(Table as of March 14, 2022. Source: hwbot.org database)

The top ranking is now owned by ASUS ROG Maximus Z690 Apex motherboard users; only the MSI Unify-X PCMark scores by OViZ HW Labs prevent a clean sweep of the chart.

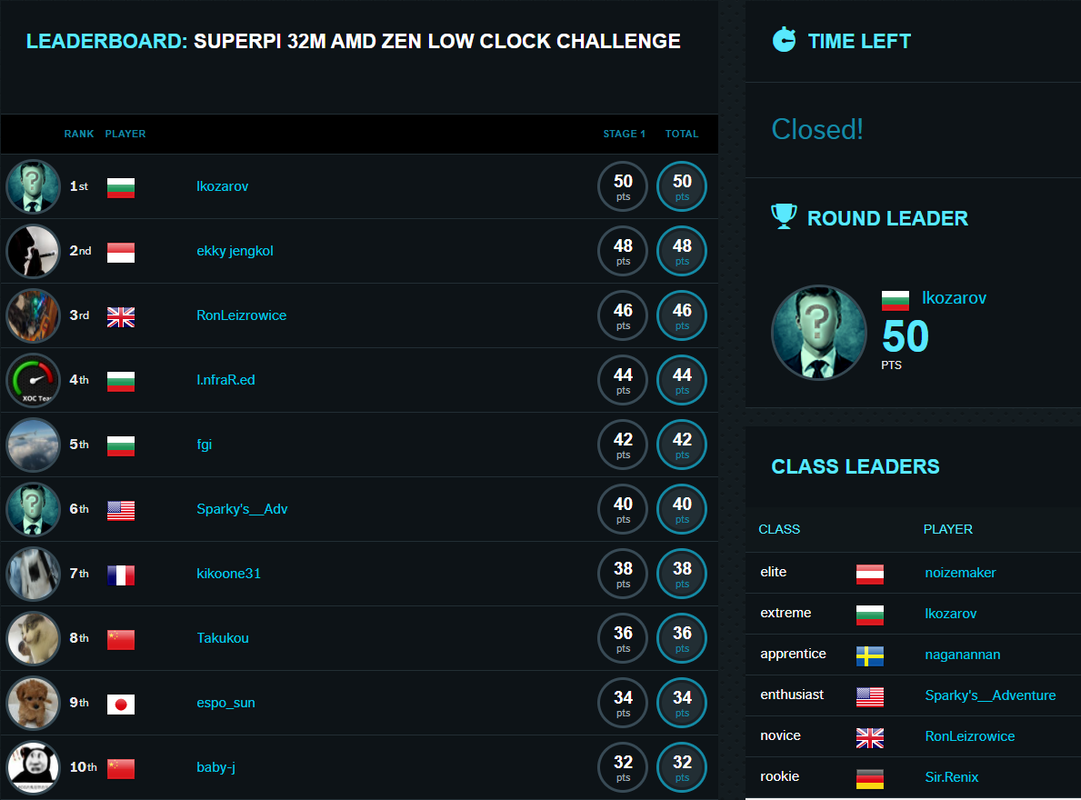

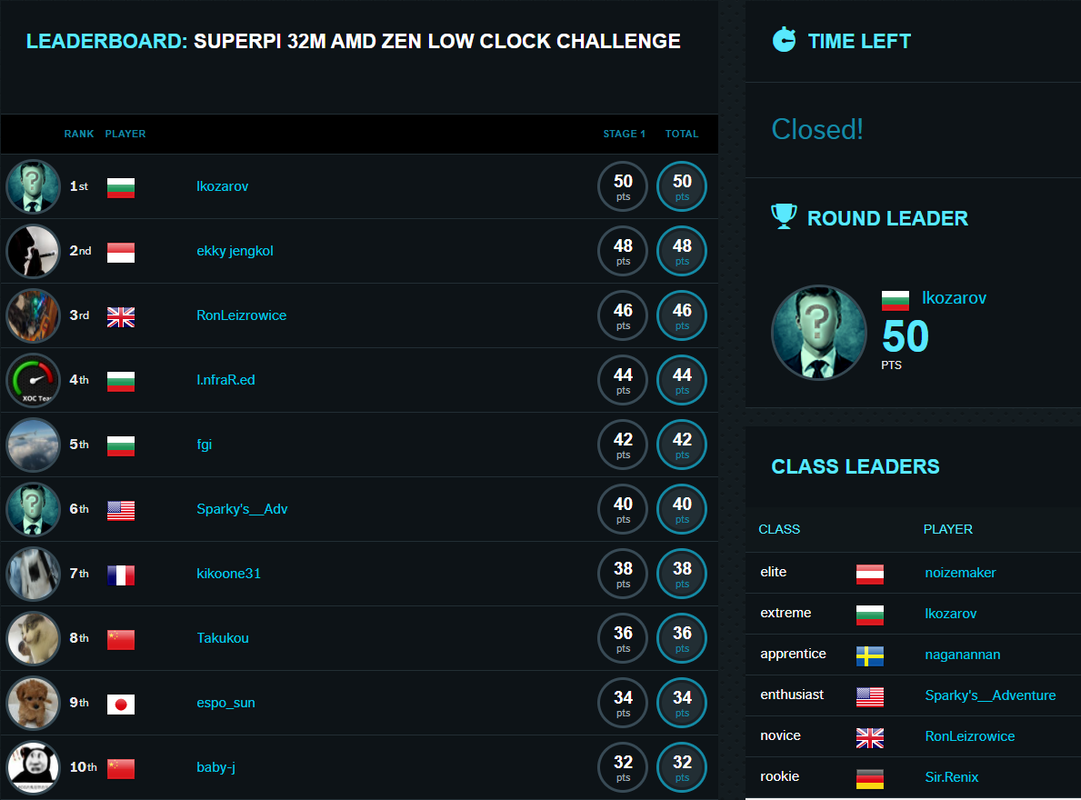

For the AMD Low Clock Challenge competition, the winners are:

Winner: Ikozarov (Bulgaria)

First runner-up: ekky.Jengkol (Indonesia)

Second runner-up: RonLeizrowice (Great Britain)

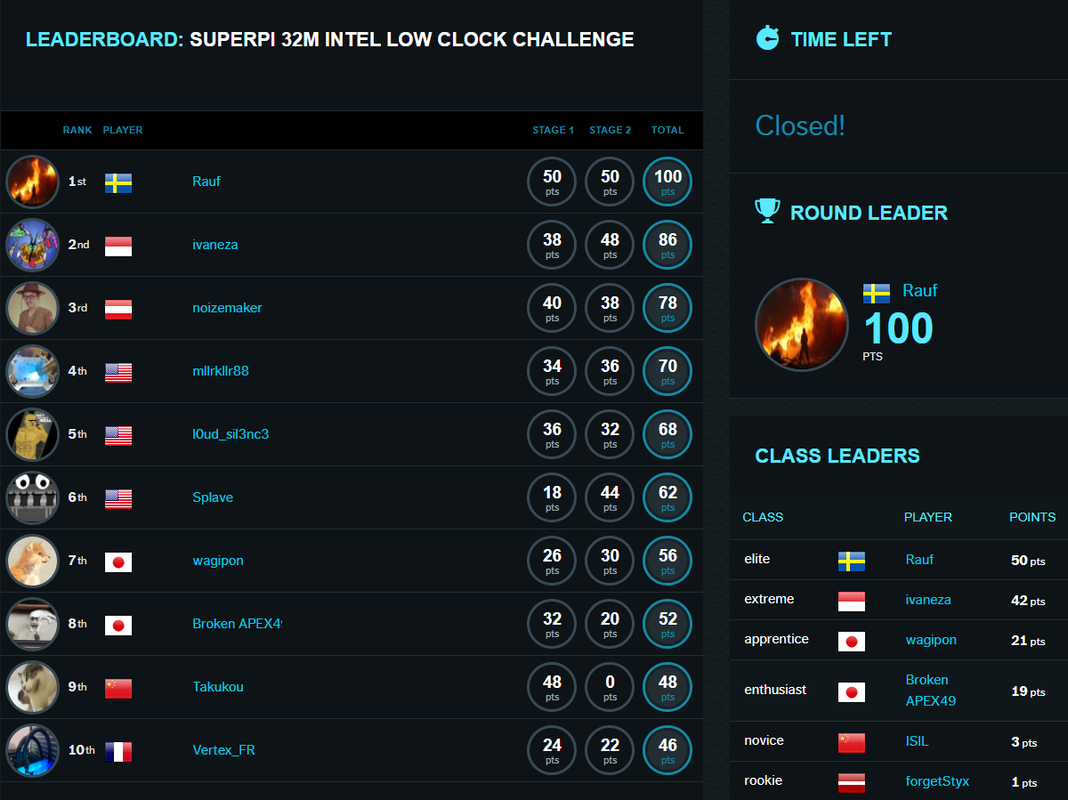

For the Intel Low Clock Challenge the top 3 are:

Winner: Rauf (Sweden)

First runner-up: ivaneza (Indonesia)

Second runner-up: noizemaker (Austria)

The HWBOT crew wishes each one of you a Happy New Year. Fingers crossed 2022 will be a year with lots of new hardware releases, resulting in top scores and World Records, and most of all a year in which overclockers can gather worldwide and just have fun, with or without liquid nitrogen!

Cyaz in 2022